How to Load Test Your Apps For Free By Going Serverless

Nothing is worse than launching a new product only to watch it go down because it couldn’t scale with demand. On the surface everything looked great. Your QAs ran a full suite of regression tests. You ran through every use case you could think of.

The application was singing. But you didn’t run it at scale. You had 3-5 people in there at any given time. That’s not a production load.

As your application’s go-live closes in, you must make sure your app can handle the expected amount of traffic. A load test is a great way to make sure you are prepared come go-live.

A load test simulates traffic to your application at a volume that exceeds the expected usage of your site. If I expected my site to bring in 10,000 requests an hour, I might load test it by throwing 30,000 requests at it in an hour.

I’ve written before on serverless load testing and the load test checklist. Today we’re going to talk about something new: a load testing mechanism that runs on serverless.

TL;DR - You can get the source for the serverless load tester on GitHub

How It Works

This load testing solution is a small serverless application that runs Postman collections in a Lambda function. The Lambda runs newman, the command-line collection runner for Postman, and logs metrics from each execution in CloudWatch.

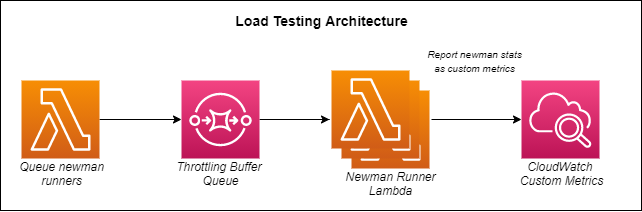

Architecture of the serverless load testing app

A triggering Lambda function will take the configured settings for the load test, create a set of events that represent a single collection run, then drop the events into an SQS queue. Another Lambda watches that queue and pulls the event details, executes the collection run via newman, and records the details.
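To make the fan-out step concrete, here is a minimal sketch of how a triggering function could turn a run count into queue messages. It is not the project's actual code: the event field names (`runId`, `s3CollectionPath`) and both function names are assumptions for illustration. The one hard fact it leans on is that SQS `SendMessageBatch` accepts at most 10 entries per call.

```python
import json
import uuid
from typing import Dict, Iterator, List

SQS_MAX_BATCH_SIZE = 10  # SendMessageBatch accepts at most 10 entries per call


def build_run_events(count: int, collection_path: str) -> List[Dict[str, str]]:
    """Create one event per collection run (field names are hypothetical)."""
    return [
        {"runId": str(uuid.uuid4()), "s3CollectionPath": collection_path}
        for _ in range(count)
    ]


def to_sqs_batches(events: List[Dict[str, str]]) -> Iterator[List[Dict[str, str]]]:
    """Group events into lists of SendMessageBatch entries, 10 at a time."""
    for start in range(0, len(events), SQS_MAX_BATCH_SIZE):
        chunk = events[start:start + SQS_MAX_BATCH_SIZE]
        yield [
            # Each entry needs an Id that is unique within its batch.
            {"Id": str(start + offset), "MessageBody": json.dumps(event)}
            for offset, event in enumerate(chunk)
        ]


batches = list(to_sqs_batches(build_run_events(25, "/collections/businessProcessA")))
print(len(batches), [len(batch) for batch in batches])  # 3 [10, 10, 5]
```

Each batch could then be handed to an SQS client's `send_message_batch` call as its `Entries` argument.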

Serverless Benefits

When you think of serverless, a few things come to mind. “Pay-as-you-go”, “highly-scalable”, and “distributed” are the first things that pop into my head. Thinking about a load testing tool, it seems like a perfect match.

With load testing, getting a horizontally scaled platform is critical. You need to pump a high volume of data into your application and that is exactly what we can do with this serverless load testing app.

To pass in a high volume of requests, we use an event source mapping from an SQS queue to a Lambda. When Lambda sees items in the queue, it scales out automatically to process all of the requests.

A huge benefit of processing events this way is that the event source mapping will throttle itself if you get a little overzealous. Meaning Lambda will not try to be greedy and run more instances than the AWS account allows.

By default, an AWS account allows for 1,000 concurrent Lambda executions per region. If you drop in a load test that calls for 10,000 runs, the event source mapping will make sure Lambda only picks up more runs when it has concurrency available.
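A quick back-of-the-envelope sketch shows what that throttling means for how long a test takes. It assumes every run takes the same time (the 30 second figure is borrowed from the cost example later in this post) and that the whole concurrency limit is available to the runner. It ignores the ramp-up of the event source mapping and anything else in the account using Lambda, so treat the result as a lower bound.

```python
import math


def estimate_min_duration_seconds(
    total_runs: int, concurrency_limit: int, avg_run_seconds: float
) -> float:
    """Lower bound on test duration if runs execute in full 'waves'."""
    waves = math.ceil(total_runs / concurrency_limit)
    return waves * avg_run_seconds


# 10,000 runs at 1,000 concurrent executions is 10 waves of 30 seconds each.
print(estimate_min_duration_seconds(10_000, 1_000, 30.0))  # 300.0
```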

Lambda automatically runs functions across multiple availability zones in a region, so the load comes from more than one data center out-of-the-box. Keep in mind that it all still originates from a single region.

Load Test Scenarios

When testing to see if your application will perform at scale, you must identify your primary business flows. The odds are high that not all users of your system will be performing the same task at the same time.

One set of users might be performing Business Process A, while another set might be doing Business Process B. A third, much smaller set of users might be doing Business Process C.

When done together, the effects on your system could be completely different than if these business processes were done in isolation. Meaning Business Process B could put contention on a different set of resources when Business Process A is running alongside it than when either process is run on its own.

So providing load on multiple business processes at the same time will give us a more realistic view of how the system performs under pressure.

In our serverless load testing tool, we can create a set of distributions to simulate this weighted traffic.

Each business process is represented by a distribution and each distribution contains a Postman collection and a percentage of the traffic to simulate.

In our example above, we could accurately simulate a real world use case by providing the following configuration to our load tester:

    {
      "count": 10000,
      "distributions": [
        {
          "name": "Business Process A",
          "percentage": 60,
          "s3CollectionPath": "/collections/businessProcessA"
        },
        {
          "name": "Business Process B",
          "percentage": 30,
          "s3CollectionPath": "/collections/businessProcessB"
        },
        {
          "name": "Business Process C",
          "percentage": 10,
          "s3CollectionPath": "/collections/businessProcessC"
        }
      ]
    }

With the configuration above, Business Process A will receive 60% of the traffic, Business Process B will receive 30%, and Business Process C will receive 10%.

Since we are running a total of 10,000 collections, that means:

- Business Process A will be run 6,000 times

- Business Process B will be run 3,000 times

- Business Process C will be run 1,000 times

By providing a distribution of business processes across the system, we stress the application differently (and ideally more realistically) than running one at a time.
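The split above is simple arithmetic, and a small sketch shows how a tool could derive it from the configuration. This is an illustration rather than the load tester's actual logic: the function name is made up, and handing any rounding remainder to the largest distribution is just one reasonable choice.

```python
from typing import Any, Dict


def runs_per_distribution(config: Dict[str, Any]) -> Dict[str, int]:
    """Translate percentages into a whole number of runs per distribution."""
    distributions = config["distributions"]
    total_percentage = sum(d["percentage"] for d in distributions)
    if total_percentage != 100:
        raise ValueError(f"percentages add up to {total_percentage}, expected 100")

    count = config["count"]
    runs = {d["name"]: count * d["percentage"] // 100 for d in distributions}

    # Integer division can leave a few runs unassigned; give them to the
    # distribution with the largest share (an arbitrary choice for this sketch).
    remainder = count - sum(runs.values())
    if remainder:
        largest = max(distributions, key=lambda d: d["percentage"])["name"]
        runs[largest] += remainder
    return runs


config = {
    "count": 10000,
    "distributions": [
        {"name": "Business Process A", "percentage": 60},
        {"name": "Business Process B", "percentage": 30},
        {"name": "Business Process C", "percentage": 10},
    ],
}
print(runs_per_distribution(config))
# {'Business Process A': 6000, 'Business Process B': 3000, 'Business Process C': 1000}
```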

Burst vs Prolonged Load

The best description of burst vs prolonged load I’ve ever heard was a metaphor about the Super Bowl.

During the game, everyone is sitting down and watching. But as soon as the game pauses at half-time, everyone races to get up and use the bathroom. When they are done, they flush (as we all do). The sudden influx of flushing toilets is known as a burst because it far exceeds the standard amount of flushes. Everyone was holding it and finally had an opportunity to go all at the same time.

The sewer is not used to that many flushes at the same time, but we all better hope it can handle it.

This concept applies to software and the traffic to your site. You have standard everyday usage that makes up the volume 95% of the time. But there will likely be a few times where you get a sudden surge of traffic, and your site had better be able to handle it.

With the load tester, we have the option to do both. If you want to see how your application handles a big burst of traffic, the Lambda runners can scale horizontally up to tens of thousands of concurrent collection runs. You might need to request a quota increase on your concurrent executions first.

You also have the option to run a prolonged load. By changing the reserved concurrency on the Lambda function that executes newman, you can set the max number of instances that run at the same time. This will provide a controlled load into your system over a period of time as it does not allow the Lambda to horizontally scale up to your service limit.

At this time, that must be configured manually in the AWS console prior to running the load test.
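The same setting is exposed through the Lambda API as `PutFunctionConcurrency`, so it could presumably be scripted instead. The sketch below is an assumption about how you might do that, not something the load tester ships with. The helper name is made up, and the client is passed in so the function can be exercised without AWS credentials.

```python
from typing import Any


def cap_runner_concurrency(
    lambda_client: Any, function_name: str, max_concurrent_runs: int
) -> None:
    """Set reserved concurrency on the function that executes newman."""
    if max_concurrent_runs < 1:
        # A value of 0 would throttle every invocation, so reject it here.
        raise ValueError("max_concurrent_runs must be at least 1")
    lambda_client.put_function_concurrency(
        FunctionName=function_name,
        ReservedConcurrentExecutions=max_concurrent_runs,
    )


# Example usage (needs boto3 and AWS credentials; the function name is made up):
#   import boto3
#   cap_runner_concurrency(boto3.client("lambda"), "load-test-newman-runner", 50)
```

With a cap of 50 and runs that average 30 seconds, the runner would complete roughly 100 collection runs per minute, which gives you a steady load rather than a spike.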

Monitoring

You can’t have a load test without monitoring. You must be able to watch your system scale and grow as it works to meet the incoming demand. Luckily, our serverless load testing app has us covered.

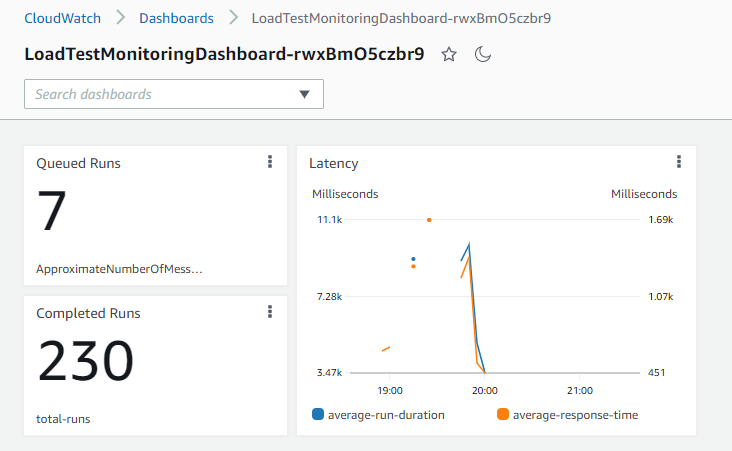

After each newman run, the Lambda pushes custom metrics into CloudWatch so you can track total duration, average request latency, the number of assertion failures, and more.
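As a rough idea of what such a metrics payload could look like, the sketch below builds the `MetricData` list that CloudWatch's `PutMetricData` API expects. The metric names, the `Distribution` dimension, and the function itself are hypothetical and may not match what the load tester actually publishes.

```python
from typing import Any, Dict, List


def build_metric_data(
    distribution_name: str,
    total_duration_ms: float,
    avg_latency_ms: float,
    assertion_failures: int,
) -> List[Dict[str, Any]]:
    """Build a PutMetricData payload for one collection run."""
    # Tagging each metric with the business process lets a dashboard
    # break results down per distribution.
    dimensions = [{"Name": "Distribution", "Value": distribution_name}]
    return [
        {"MetricName": "TotalDuration", "Value": total_duration_ms,
         "Unit": "Milliseconds", "Dimensions": dimensions},
        {"MetricName": "AverageLatency", "Value": avg_latency_ms,
         "Unit": "Milliseconds", "Dimensions": dimensions},
        {"MetricName": "AssertionFailures", "Value": assertion_failures,
         "Unit": "Count", "Dimensions": dimensions},
    ]


metric_data = build_metric_data("Business Process A", 28450.0, 212.5, 2)
print([metric["MetricName"] for metric in metric_data])
# ['TotalDuration', 'AverageLatency', 'AssertionFailures']

# Publishing would look something like this (the namespace is made up):
#   import boto3
#   boto3.client("cloudwatch").put_metric_data(
#       Namespace="LoadTest", MetricData=metric_data)
```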

A dashboard is created upon deployment to track the load test as it progresses. You can also view a pie chart of your business process distributions and failures. This will allow you to see if the distributed load is causing failures on one process but not the other.

Note - this is a monitoring dashboard of the load test itself and the success rate of your business processes. You will need to build another dashboard to monitor your system under load.

Cost

The age-old question of “how much does it cost” might be ringing through your head right now. Lambda cost is based on two things: the number of requests and the GB-seconds consumed. This means that the cost is directly impacted by the amount of time it takes to run your collections.

Let’s take an example.

Say you run a load test of 100,000 collections and each one takes an average of 30 seconds to run at the configured 128MB of memory. The cost of that load test could be calculated with the following equation (in us-east-1 using ARM architecture):

(100,000 / 1M) x $.20 + (30,000ms x $.0000000017 x 100,000) = $5.12

So it would only cost about 5 dollars to run a load test that ran through 100,000 business processes in your system. Not too shabby.
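If you want to plug in your own numbers, the same equation is easy to script. The two prices below are the ones used in the equation above (us-east-1, ARM, 128MB). Lambda pricing changes over time, so check them against the current pricing page before relying on the result.

```python
PRICE_PER_MILLION_REQUESTS = 0.20      # USD, from the equation above
PRICE_PER_MS_128MB_ARM = 0.0000000017  # USD per millisecond at 128MB on ARM


def load_test_cost(runs: int, avg_run_ms: int) -> float:
    """Cost of the Lambda runner only, ignoring the free tier."""
    request_cost = runs / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    compute_cost = runs * avg_run_ms * PRICE_PER_MS_128MB_ARM
    return request_cost + compute_cost


print(f"${load_test_cost(100_000, 30_000):.2f}")  # $5.12
```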

If you consider the Lambda free tier, which includes 1 million free requests and 400,000 GB-seconds of compute a month, this run fits inside it. The test uses 100,000 requests and 375,000 GB-seconds (100,000 runs x 30 seconds x 0.125 GB), which means it runs for free!

The beauty of a serverless load testing solution is that it only costs you money when it’s running. So if you deploy the solution to your AWS account and use it once, you only pay for the one time it ran. There are no licenses. There are no provisioned costs. Pay as you go.

With the free tier, you really just get one free run a month with the scenario described above. But if your tests take less time to run or if the load is significantly less than 100,000 runs, you could run it multiple times for free.
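To see how many runs fit into the free tier for your own scenario, a small sketch like the one below can help. It assumes the free tier allowances quoted in this post, that nothing else in the account is using them, and that the triggering function's own usage is negligible.

```python
FREE_TIER_REQUESTS = 1_000_000  # free requests per month
FREE_TIER_GB_SECONDS = 400_000  # free GB-seconds of compute per month


def gb_seconds(runs: int, avg_run_seconds: float, memory_mb: int) -> float:
    """Compute consumed by a load test, in GB-seconds."""
    return runs * avg_run_seconds * memory_mb / 1024


def free_tests_per_month(runs_per_test: int, avg_run_seconds: float, memory_mb: int) -> int:
    """How many whole load tests fit inside the monthly free tier."""
    by_compute = int(FREE_TIER_GB_SECONDS // gb_seconds(runs_per_test, avg_run_seconds, memory_mb))
    by_requests = FREE_TIER_REQUESTS // runs_per_test
    return min(by_compute, by_requests)


print(gb_seconds(100_000, 30, 128))           # 375000.0
print(free_tests_per_month(100_000, 30, 128))  # 1
print(free_tests_per_month(10_000, 30, 128))   # 10
```

Compute is the limiting factor in both cases here: the request allowance would cover far more tests than the GB-seconds do.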

Of course, if your system is also serverless, you will be paying for the executions of your application to run. But we’re just analyzing the cost of the load test runner itself.

Conclusion

If you are looking for a mechanism to load test your application, look no further. You can deploy the load test app from GitHub to your AWS account and hit the ground running.

Building your business processes as Postman collections benefits you more than just the load test. You can use the collections for proactive monitoring when you’re done to make sure your system remains in a healthy state.

Serverless has a wide range of use cases, and who knew that load testing could be one of them! It’s a fast, scalable, cheap way to stress test your system.

Happy coding!
